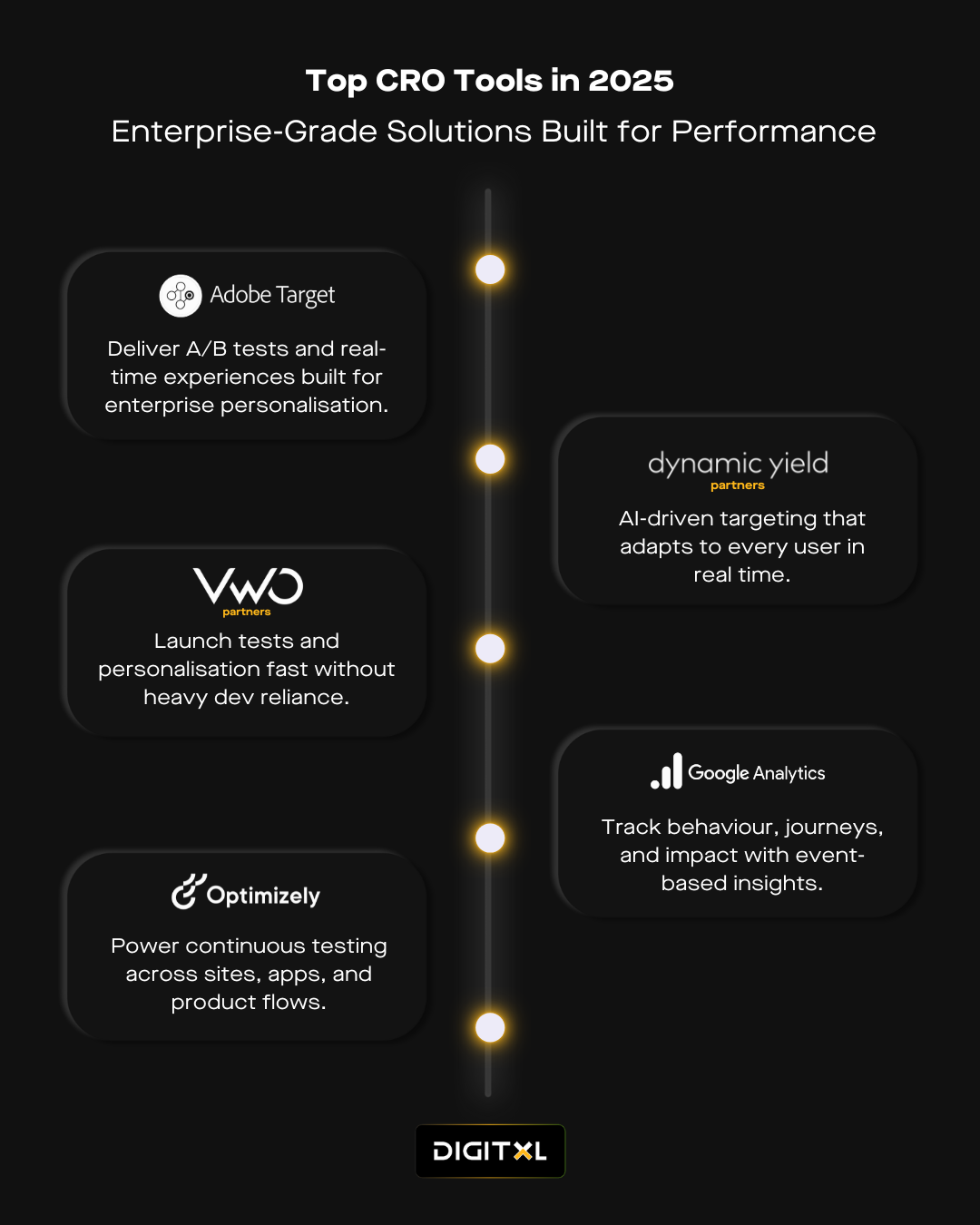

What 2025’s CRO Stack Actually Looks Like

25 Jun 2025

CRO sits inside decisions that already exist: campaigns, checkouts, journeys, permissions. The platforms that support it aren’t selected for features. They’re chosen because the teams using them (and often their CRO agency) trust what they surface and act on what they show.

The most effective enterprise CRO tools aren’t picked to impress procurement. They’re kept because they hold under pressure – legal reviews, overlapping channels, unclear attribution, performance drift. They reduce friction between seeing a problem and resolving it.

1. Tools Overview

2. Adobe Target

Built for performance at scale.

Adobe Target continues to anchor CRO programs that operate across multiple brands, channels and internal teams. It handles complex experience logic without breaking compliance frameworks or marketing momentum. In 2025, it remains one of the most powerful personalisation and experimentation tools for enterprise, especially when time-to-launch and confidence in audience logic are equally important.

Where it shows up:

- Experience testing with strict legal requirements

- High-traffic retail and financial UX

- Activation tied to Adobe Analytics and Journey Optimizer

3. VWO

For teams who move fast and test often.

VWO fits into CRO programs that are marketing-led but operationally mature. It gives teams the control to observe user behaviour, roll out variations, and build hypotheses using the same platform. For companies designing a CRO stack for digital teams that operate with constrained developer cycles, VWO keeps experimentation visible and active — not ad hoc.

Where it shows up:

- Product launches where speed of iteration matters

- Campaign teams owning their own performance testing

- UX tweaks running in parallel to design systems

4. Optimizely

When testing moves beyond UI changes.

Optimizely supports experimentation at a different layer. Its Feature Experimentation product (formerly Full Stack) gives engineering and product teams the ability to manage experiments as part of application logic, not just interface edits. It’s often compared with Adobe Target for enterprise rollouts, but the use cases tend to diverge.

Where it shows up:

- Backend testing tied to feature flags

- Development environments with test gates

- Governance-led product experimentation

(Also frequently evaluated in Adobe Target vs Optimizely RFPs)
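To make "experiments as part of application logic" concrete, here is a minimal sketch of flag-style variant assignment in server-side code. It is not Optimizely's SDK or its bucketing algorithm; the function name `assign_variant`, the experiment key, and the 50/50 weights are all hypothetical. It only illustrates the general idea of deterministic bucketing: hashing a stable user ID so the same user always lands in the same variant without storing any state.

```python
import hashlib


def assign_variant(user_id: str, experiment_key: str,
                   variants: list[tuple[str, float]]) -> str:
    """Deterministically map a user to a variant (illustrative only).

    `variants` is a list of (name, weight) pairs whose weights sum to 1.0.
    """
    # Hash the experiment key together with the user ID so that the same
    # user can fall into different buckets for different experiments.
    digest = hashlib.sha256(f"{experiment_key}:{user_id}".encode("utf-8")).hexdigest()
    # Turn the first 32 bits of the hash into a number in [0, 1).
    point = int(digest[:8], 16) / 0x100000000

    cumulative = 0.0
    for name, weight in variants:
        cumulative += weight
        if point < cumulative:
            return name
    # Guard against floating-point rounding when weights sum to just under 1.
    return variants[-1][0]


# Hypothetical experiment: a backend pricing rule behind a flag.
VARIANTS = [("control", 0.5), ("treatment", 0.5)]

if __name__ == "__main__":
    variant = assign_variant("user-123", "checkout_pricing_rule", VARIANTS)
    print(f"user-123 sees: {variant}")
```

Because assignment depends only on the user ID and the experiment key, any service in the stack can compute the same answer independently, which is one reason this pattern suits backend tests and gated releases.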

5. Dynamic Yield

Personalisation that adapts to live context.

Dynamic Yield is chosen for its ability to handle high-velocity segmentation and context shifts — without constant manual rule updates. It helps unify experience logic across web, mobile, and in some cases, point-of-sale or kiosk. In the context of modern personalisation and experimentation tools, it enables teams to update logic at pace without creating gaps in brand or flow consistency.

Where it shows up:

- Real-time content and product recommendations

- High-frequency retail or hospitality use cases

- Travel journeys with fluctuating availability

6. Google Analytics 4 (GA4)

The behavioural reference point.

Most teams aren’t relying on GA4 for answers — they’re using it to validate the questions. Its event-based model gives analysts a cleaner way to connect outcomes to behaviour across multiple sessions and devices. GA4 underpins most conversion rate optimisation platforms in 2025, even if the frontend tests are being run elsewhere.

Where it shows up:

- Mapping behavioural signals to funnel outcomes

- Understanding drop-offs by intent stage

- Pulling consistent metrics into BigQuery or Looker Studio
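As a rough illustration of what an event-based model makes possible, the sketch below counts how many users reach each step of an ordered funnel from a flat list of events. The field names `user_pseudo_id`, `event_name` and `event_timestamp` follow the GA4 BigQuery export, and the step names are GA4's recommended ecommerce events; the sample rows and the `funnel_counts` helper are hypothetical, and a production version would more likely be a SQL query in BigQuery.

```python
from collections import defaultdict

# Ordered funnel steps, using GA4's recommended ecommerce event names.
FUNNEL = ["view_item", "add_to_cart", "begin_checkout", "purchase"]


def funnel_counts(events, steps=FUNNEL):
    """Count users who reach each step, in order, across all their events."""
    by_user = defaultdict(list)
    for event in events:
        by_user[event["user_pseudo_id"]].append(event)

    reached = [0] * len(steps)
    for user_events in by_user.values():
        next_step = 0
        # Walk each user's events chronologically and advance one step
        # at a time; out-of-order events do not count toward the funnel.
        for event in sorted(user_events, key=lambda e: e["event_timestamp"]):
            if next_step < len(steps) and event["event_name"] == steps[next_step]:
                reached[next_step] += 1
                next_step += 1
    return dict(zip(steps, reached))


# Hypothetical sample rows, shaped like a flattened export.
SAMPLE_EVENTS = [
    {"user_pseudo_id": "u1", "event_name": "view_item", "event_timestamp": 1},
    {"user_pseudo_id": "u1", "event_name": "add_to_cart", "event_timestamp": 2},
    {"user_pseudo_id": "u1", "event_name": "begin_checkout", "event_timestamp": 3},
    {"user_pseudo_id": "u1", "event_name": "purchase", "event_timestamp": 4},
    {"user_pseudo_id": "u2", "event_name": "view_item", "event_timestamp": 1},
    {"user_pseudo_id": "u2", "event_name": "add_to_cart", "event_timestamp": 5},
    {"user_pseudo_id": "u3", "event_name": "view_item", "event_timestamp": 2},
]

if __name__ == "__main__":
    for step, users in funnel_counts(SAMPLE_EVENTS).items():
        print(f"{step}: {users}")
```

Grouping by user rather than by session is what lets the same count span multiple sessions, provided the identifier is stable across them.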

7. Microsoft Clarity

Visual feedback without lift.

Clarity works well for teams that need immediate visibility into friction without setting up another analytics property. It’s often installed alongside GA4 or VWO, and gives design and CRO leads a fast visual signal to support or reject a proposed test. It doesn’t drive the test strategy; it sharpens it.

Where it shows up:

- Form drop-offs without clear reason

- Page or element hesitation not reflected in event data

- Secondary validation when A/B test data is ambiguous
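One way to read "ambiguous" in that last point is a result that does not clear a significance threshold. The sketch below runs a standard two-proportion z-test on conversion counts; the counts are made up for illustration, and nothing here reflects how VWO, Optimizely or any other tool computes its statistics. A large p-value is the kind of result where session recordings can help decide whether to extend, redesign or drop a test.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical counts: control vs. variation.
    z, p = two_proportion_z_test(conv_a=480, n_a=10_000, conv_b=510, n_b=10_000)
    print(f"z = {z:.2f}, p = {p:.3f}")
```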

8. The Thread Across All Six

These tools are often used together, not because of feature gaps, but because each holds a distinct position in the workflow. Target sets the logic. VWO tests the shift. Optimizely carries it deeper. Dynamic Yield maintains consistency. GA4 confirms the pattern. Clarity explains the hesitation.

What connects them isn’t their category. It’s their role in reducing ambiguity. Teams keep them because they simplify decisions that would otherwise stall.

9. Final Frame

Strong CRO programs operate with less friction. Insight moves quickly. Decisions hold. The stack isn’t the story; it’s the infrastructure that lets teams focus on what changed and what to do next.